SpendOps with Azure Cosmos DB

I went on a speaking tour around Australia to deliver my Real-life SpendOps with Azure Cosmos DB talk. The talk covers the basics of Cosmos DB and how you can get the most out of it by optimizing your queries, choosing the right partition keys, and automating the SpendOps process. The goal is to predict not only how much throughput your application will require, but also the future cost of any code changes you make to it.

The Video

My talk was recorded live at the Sydney .NET User Group (June 2019).

Check out all the other awesome recordings at SSW TV

Credits

Special thanks go to Adam Cogan, SSW TV, Thiago Passos, Jason Taylor, Matt Wicks, Liam Elliot, Calum Simpson, Jernej Kavka (JK), Camilla R. S. Bronzon and Penny Walker for helping test my content, giving public speaking advice, and helping arrange the whole tour. It really was a great experience!

SpendOps

As developers, we have a direct cost impact at every stage of the traditional DevOps life-cycle. So much so that we can label this the SpendOps life-cycle.

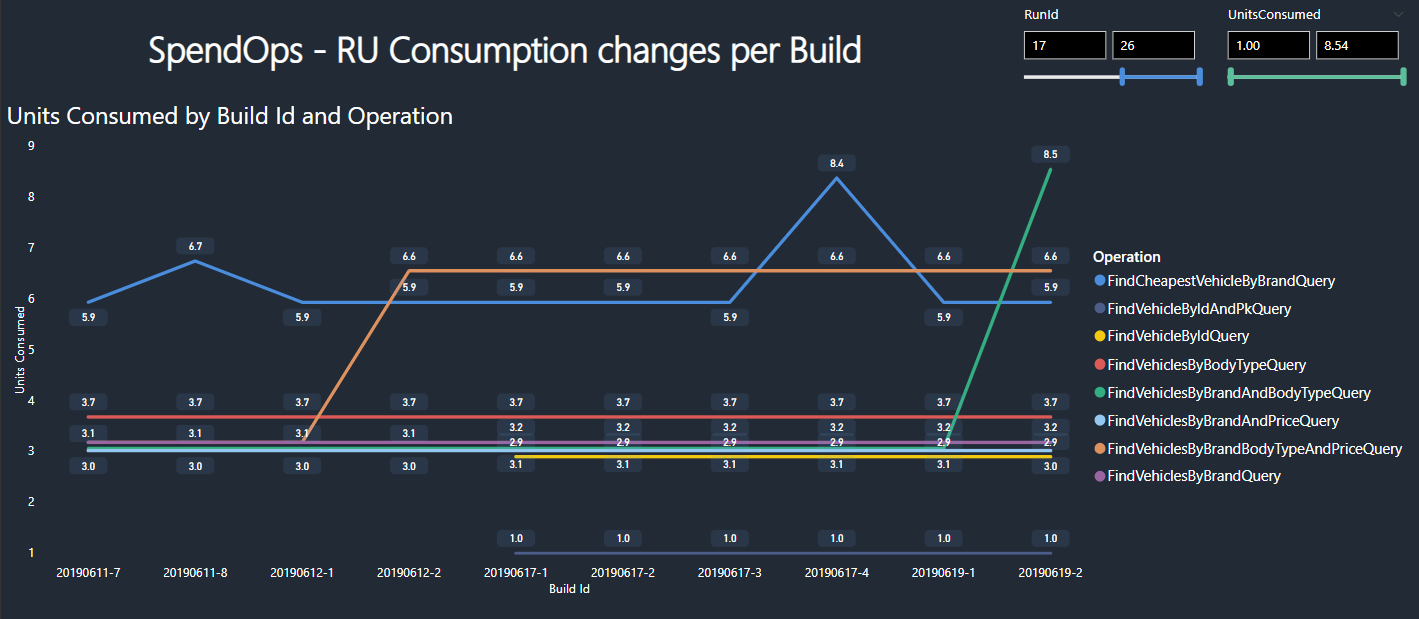

Being able to measure the cost change associated with each code change before releasing our software to production is super important. If a code change causes a significant increase in cost or required throughput, we can block the change from being released to production. Once our application is instrumented correctly, we can turn regular unit and/or integration tests into Spend Tests.

Regular tests are concerned with passing or failing. Perhaps someone also cares about how long the tests take to run and uses that as a metric for positive or negative change. Code coverage is also a very popular metric that developers care about when running their tests. With Spend Tests, however, we also care about the cost of the test. The cost indicates either the dollar value for that test or the amount of resources required to execute and evaluate it.

Whenever a Spend Test indicates that a particular feature or function has a higher than expected increase in cost or throughput requirement in comparison to the previous build, we are dealing with a Spend Bug! Just like traditional bugs, we can and should log these Spend Bugs in our backlog so that we can make a plan to fix or at the very least understand the increase.
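To make the idea of a Spend Bug concrete, here is a minimal sketch in Python (not the code from the demo). It assumes each Spend Test has already recorded a single cost figure, for example the request units it consumed, and that we have those figures for the previous and the current build. The names `SpendBug` and `find_spend_bugs`, the test names, the numbers and the 10% threshold are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpendBug:
    """A test whose cost grew more than we are willing to accept (hypothetical type)."""
    test_name: str
    previous_cost: float
    current_cost: float

    @property
    def increase_pct(self) -> float:
        return (self.current_cost - self.previous_cost) / self.previous_cost * 100.0


def find_spend_bugs(previous, current, max_increase_pct=10.0):
    """Compare per-test costs of two builds and return the tests that regressed.

    `previous` and `current` map a test name to its measured cost.
    The 10% default threshold is an arbitrary assumption.
    """
    bugs = []
    for name, cost in current.items():
        baseline = previous.get(name)
        if baseline is None or baseline <= 0:
            continue  # new test (or unusable baseline): nothing to compare against yet
        candidate = SpendBug(name, baseline, cost)
        if candidate.increase_pct > max_increase_pct:
            bugs.append(candidate)
    return bugs


# Illustrative measurements only, not real data.
previous_build = {"get_order_by_id": 1.0, "list_orders_for_customer": 12.5}
current_build = {"get_order_by_id": 1.0, "list_orders_for_customer": 48.0, "create_order": 7.2}

bugs = find_spend_bugs(previous_build, current_build)
for bug in bugs:
    print(f"Spend Bug: {bug.test_name} went from {bug.previous_cost} to "
          f"{bug.current_cost} ({bug.increase_pct:.0f}% increase)")
```

A new test such as `create_order` has no baseline, so this sketch skips it rather than flagging it; whether that is the right call is a team decision.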

Ultimately, having collected the cost measurements via our Spend Tests, we can project these measurements onto a production workload to determine the required throughput and actual cost of our Cosmos DB database.
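The projection itself is simple arithmetic. The sketch below is one possible way to do it in Python, assuming we know the request units (RU) each operation costs and how many times per second we expect it to run at peak in production. The function names, the operation rates and the price are placeholders, so check the current Azure pricing for your region before trusting any dollar figure. It also assumes throughput is provisioned in steps of 100 RU/s and billed hourly.

```python
import math


def required_throughput(ru_per_operation, peak_ops_per_second):
    """Project measured RU costs onto an expected peak workload.

    Both arguments map an operation name to a number. Every operation in the
    workload must have a measured cost, otherwise a KeyError is raised.
    The result is rounded up to the next multiple of 100 RU/s.
    """
    total = sum(ru_per_operation[op] * rate for op, rate in peak_ops_per_second.items())
    return math.ceil(total / 100) * 100


def monthly_cost(ru_per_second, price_per_100_ru_hour, hours_per_month=730):
    """Estimate the monthly bill for a provisioned throughput level."""
    return ru_per_second / 100 * price_per_100_ru_hour * hours_per_month


# Illustrative numbers only.
measured_ru = {"get_order_by_id": 1.0, "list_orders_for_customer": 12.5, "create_order": 7.2}
expected_peak = {"get_order_by_id": 200, "list_orders_for_customer": 20, "create_order": 10}

throughput = required_throughput(measured_ru, expected_peak)
cost = monthly_cost(throughput, price_per_100_ru_hour=0.008)  # placeholder price
print(f"Provision {throughput} RU/s, roughly ${cost:.2f} per month")
```

Rerunning this projection with the measurements from a new build shows what a code change would cost in production before it ships.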

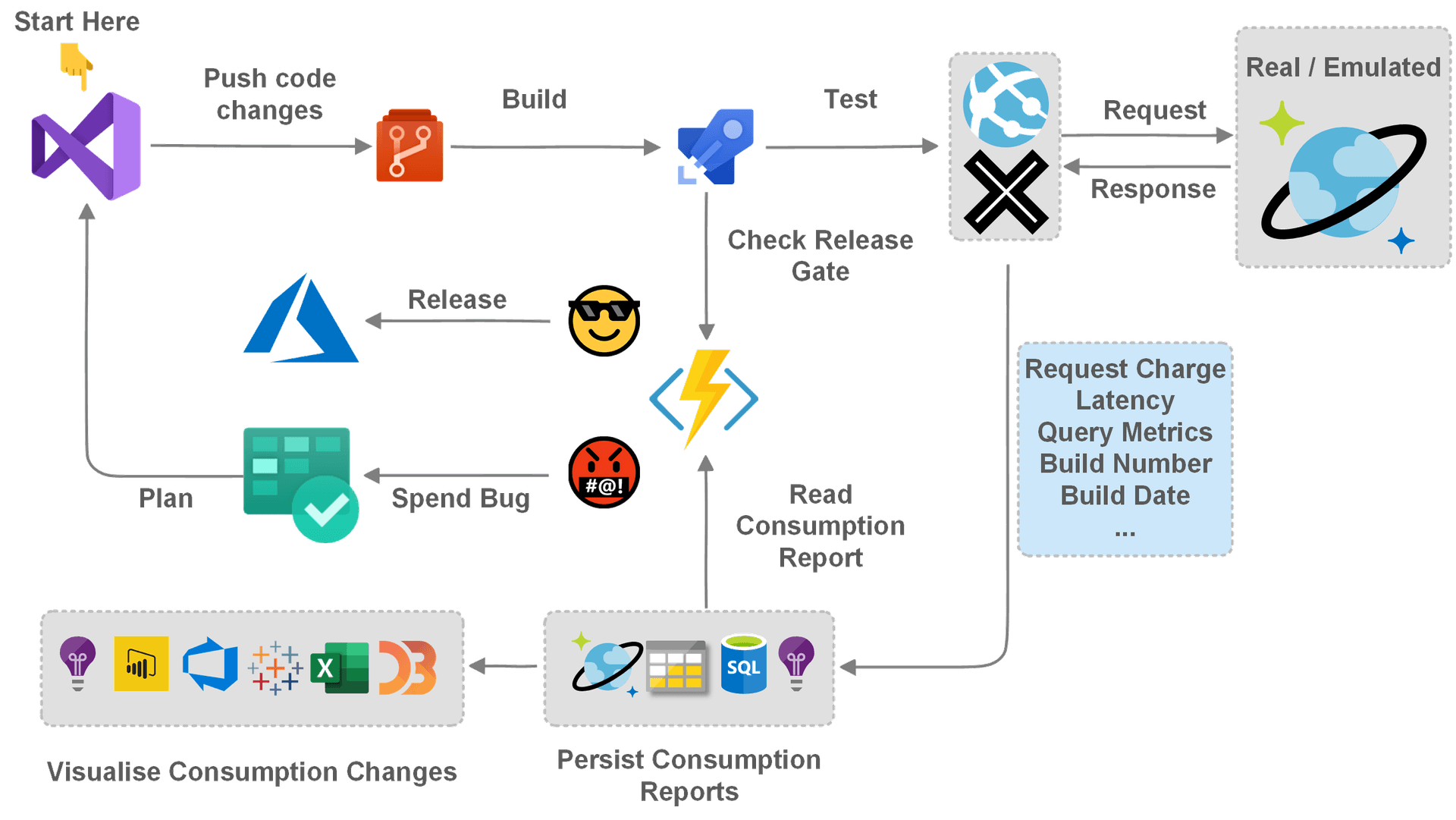

SpendOps Workflow

Here is a simple SpendOps workflow that shows how we can make a couple of minor adjustments to our regular DevOps CI process. Once we commit our code, build the application and run the tests, we need to persist the consumption information. Once it is stored, we can query the data and use the results of these queries to form the basis of a Release Gate. The metrics we set then determine whether a particular build is cost effective enough to be released to the desired environment.
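As a rough sketch of the persist, query and gate steps, here is a small Python example that uses an in-memory SQLite database as a stand-in for wherever you actually store consumption data. It is not the implementation from the demo: the table layout, the function names, the sequential build numbers and the 10% threshold are all assumptions made for illustration.

```python
import sqlite3


def open_store(path=":memory:"):
    """Open (or create) the store that holds per-test consumption for each build."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS spend ("
        "build INTEGER, test_name TEXT, request_units REAL, "
        "PRIMARY KEY (build, test_name))"
    )
    return conn


def record_build(conn, build, costs):
    """Persist the measured cost of every Spend Test for one build."""
    conn.executemany(
        "INSERT OR REPLACE INTO spend VALUES (?, ?, ?)",
        [(build, name, ru) for name, ru in costs.items()],
    )
    conn.commit()


def total_for_build(conn, build):
    """Return the summed cost of a build, or None if nothing was recorded."""
    row = conn.execute(
        "SELECT SUM(request_units) FROM spend WHERE build = ?", (build,)
    ).fetchone()
    return row[0]


def release_gate(conn, build, max_increase_pct=10.0):
    """Pass the gate only if total cost grew by at most `max_increase_pct`
    compared to the previous build (assumed to be `build - 1`)."""
    current = total_for_build(conn, build)
    previous = total_for_build(conn, build - 1)
    if current is None:
        return False  # no measurements for this build: do not release blindly
    if previous is None or previous == 0:
        return True  # no usable baseline yet
    return (current - previous) / previous * 100.0 <= max_increase_pct


# Illustrative data only.
store = open_store()
record_build(store, 41, {"get_order_by_id": 1.0, "list_orders_for_customer": 12.5})
record_build(store, 42, {"get_order_by_id": 1.0, "list_orders_for_customer": 13.0})
record_build(store, 43, {"get_order_by_id": 1.0, "list_orders_for_customer": 48.0})

for build in (42, 43):
    verdict = "release" if release_gate(store, build) else "blocked"
    print(f"Build {build}: {verdict}")
```

In a real pipeline the gate result would feed whatever release approval mechanism your CI/CD tool provides; a non-zero exit code from a script like this is often enough.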

SpendOps Source Code

The PowerPoint slides and source code for the demo can be found on GitHub. The code is also available as NuGet packages that you can reference in your projects.